The Discrete Fourier Transform (DFT) is a basic algorithm for analyzing the frequency content of a sampled sequence. It’s form is adequate for direct numerical computation on a digital computer. However, the computation of the DFT is unnecessarily cumbersome for long sequences. There is another class of algorithms known as the Fast Fourier Transform (FFT) algorithms that are very efficient for computing the DFT. To demonstrate the computational shortcomings of the DFT consider its form:

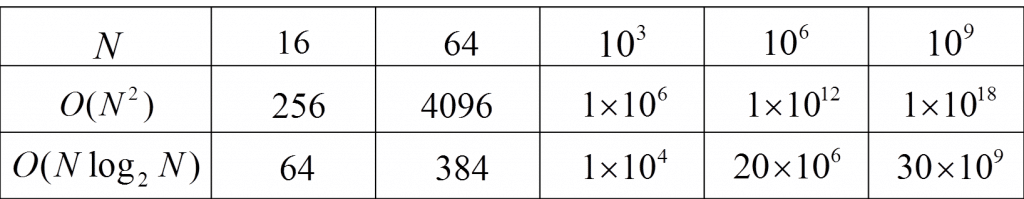

Here, each X(k) requires N complex multiplies and N-1 complex adds. This results in a total of O(N2) computations for the DFT, where each computation is one complex multiply/accumulate (MAC) calculation. Contrast this to the FFT, which requires only O(Nlog2N) operations (or computations). When using the DFT with short sequences, computation speed is not necessarily a problem, but for long sequences the computation time can become unwieldy. A comparison of the operations count for both algorithms is shown in the table below:

Operations count comparison for DFT vs. FFT.

If each MAC operation constituted 30 nanoseconds, then performing a DFT on a sequence of one billion samples would require (using Figure 1 above) 1018 operations. This means a total computation time of approximately 9.5 years, which is absolutely ridiculous! On the other hand, the FFT would require only 15 minutes for the same length sequence. For such large transform lengths, the savings in time is clearly significant.

The most widely known FFT algorithm is the Cooley-Tukey algorithm which recursively divides a DFT of size N into smaller sized DFTs of size N/2 in order to achieve the reduced computation time O(Nlog2N). The most common implementation of Cooley-Tukey is known as a radix-2 decimation-in-time (DIT) FFT. The algorithm first divides the DFT of a sequence x(n) into two parts, the odd elements, and the even:

A common multiplier of ![]() is then factored out of the second term:

is then factored out of the second term:

Therefore the two expressions above are now in the form of two N=2 point DFTs and can be written explicitly as a sum of even and odd terms:

The index k must extend to N-1, and using the periodic property of the even and odd DFTs it can be seen that:

The FFT also exploits the periodic nature of ![]() :

:

This allows the number of calculations involving ![]() to be cut in half and for 0 ≤ k ≤ N/2 The FFT is:

to be cut in half and for 0 ≤ k ≤ N/2 The FFT is:

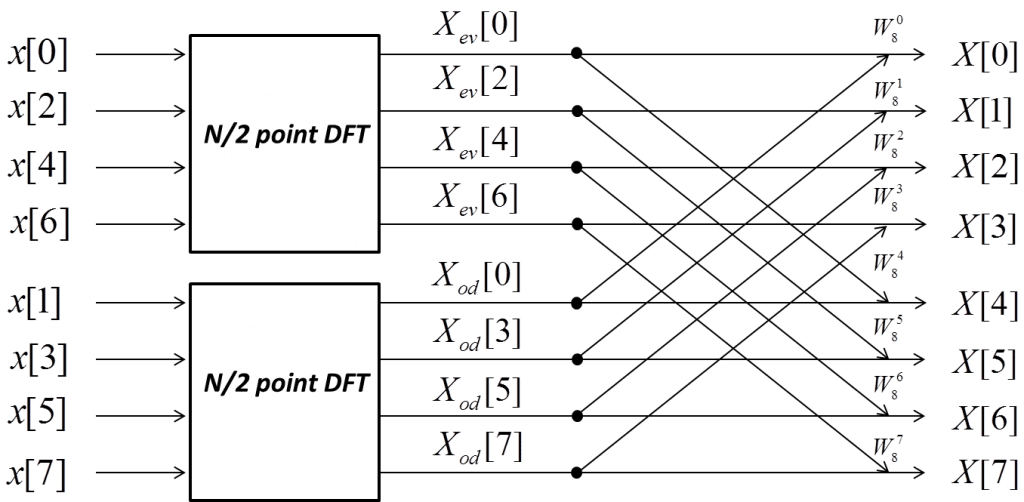

The process of re-expressing the DFT recursively in terms of two DFTS of size N/2 is the basis of the radix-2 decimation in time (DIT) Fast Fourier Transform. The method of recursively breaking down a problem into smaller problems of the same type is commonly known as ‘Divide and Conquer’. The block diagram below illustrates how an N point DFT can be split into two N/2 point DFTs to handle the even and odd values of a length 8 sequence. This splitting saves computation time and so the process would necessarily be continued. Therefore each N/2 point DFT can be divided into two N/4 point DFTs and so on.

Decimation in Time method of FFT

The radix-2 DIT FFT works by assuming that N is a power of two. This is only one of many variants of FFT algorithms. Some algorithms apply to prime types of numbers such as Bluestein’s or Rader’s algorithm. Other FFTs such as Bruun’s algorithm use a recursive polynomial-factorization method to derive the FFT.

FFTs are used extensively in digital signal processing for spectral analysis of signals. They are also used for filtering and processing signals in the frequency domain, which is often preferred over the time domain. The in depth use of FFTs is beyond the scope of this article, but includes among other things: vibrational analysis for heavy machinery, and harmonic distortion analysis for audio amplifiers.

More Information

References

P Embree, C Algorithms For Real-Time DSP (Upper Saddle River, NJ.: Prentice-Hall, 1995).

S Haykin and M Moher, Communication Systems, 5th ed. (New Jersey: John Wiley & Sons Inc., 2009).