A Hidden Markov model is an important tool in Acoustic Echo Cancellation (AEC) for detecting speech events. It can search the microphone signal during double talk for specific patterns observed in the far end speech and be used to detect unsuppressed speech events.

Hidden Markov Model

The Hidden Markov model is a discrete time bivariate random process {St, Yt} where St represents the unobservable Markov chain, and Yt represents the conditionally independent observations, also called the alphabet of the hidden markov model [1]. In other words, a Hidden Markov Model is a discrete Markov chain observed through a memoryless and time-invariant channel b(yt|st) where the small letter denotes a realization of its associated random process. For speech applications, autoregressive and mixture hidden markov models are often used. Given the observation vector ![]() we can represent the next observation as:

we can represent the next observation as:

Where the ai are the AR coefficients given by the auto-correlation vector aim and eK is additive white noise. This model can be improved by considering what is called a mixture process. In speech, a single vowel may be voiced differently depending on the speaker’s prosody. In a hidden markov model, this may give rise to different observation probabilities for the same vowel, where in reality all of these observations represent the same speech event and thus should give rise to a single density. To create such a density is to create a mixture process, defined as the cartesian product of the K dimensional observation spaces of each incarnation of the vowel

Baum-Welch Algorithm

To describe a hidden markov model [2], one needs to estimate the underlying markov chain, and have a good representation of the channel through which it is observed. The alphabet’s probability distribution when the chain is in state sj is given by:

Which tells us that we can parameterize the process as λ = (A,B, π) where A is the state transition matrix, B is the alphabet probability distribution matrix, and π is the model’s initial state. If ![]() is the optimum estimate of λ then we can define the Q-function, designed to simplify the estimation problem as:

is the optimum estimate of λ then we can define the Q-function, designed to simplify the estimation problem as:

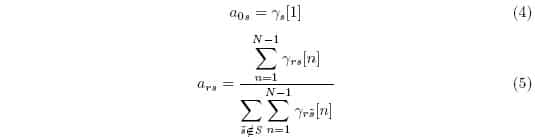

Essentially, the estimation problem comes down to finding the argument that maximizes Q. Then for a general distribution, we can estimate the transition matrix entries as:

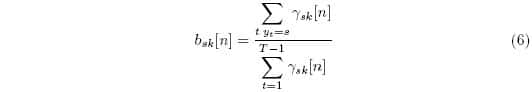

Where γ is the a-posteriori probability defined as ![]() . Then the observation probabilities are:

. Then the observation probabilities are:

Often, Q has only one critical point, thus since by iterating these approximations we get ![]() we will eventually find the optimum parameter set. By initializing the problem with many different guesses, speed can be improved.

we will eventually find the optimum parameter set. By initializing the problem with many different guesses, speed can be improved.

Speech Recognition

In general, hidden markov models are useful for speech recognition as knowledge to be used by the control logic of the echo canceller. Hidden markov models can detect speech events [3] for acoustic echo cancellation. In double talk scenarios, a hidden markov model can search the microphone signal for specific patterns first seen in the far end speech. In echo suppression, they can detect speech events that have gone unsuppressed.

In general [4], speech recognition in a hidden markov model starts by maximizing ![]() for λ when the observation vector is the target speech sound. From this maximization, the next step is to determine the best corresponding state sequence that explains the observations. This can often be difficult, and require many refinements in the size of the transition matrix itself. Once a suitable model has been found, all of the observed sequences are tested against this model, and the one with the highest liklihood is the sequence that the hidden markov model recognizes as the target.

for λ when the observation vector is the target speech sound. From this maximization, the next step is to determine the best corresponding state sequence that explains the observations. This can often be difficult, and require many refinements in the size of the transition matrix itself. Once a suitable model has been found, all of the observed sequences are tested against this model, and the one with the highest liklihood is the sequence that the hidden markov model recognizes as the target.

References

[1] Y. Ephraim, N. Merhav, “Hidden Markov Processes” IEEE Transactions on Information Theory, vol. 48, no. 6, June 2002.

[2] A. B. Portiz, “Hidden Markov Models: A Guided Tour” International Conference on Acoustics, Speech, and Signal Processing, April 1998

[3] C. Carlemalm, “On detection of double talk and changes in the echo path using a Markov modulated channel model” IEEE International Conference on Acoustics, SPeech, and Signal Processing, April 1997.

[4] L. R. Rabiner, “A tutorial on hidden Markov models and selected applications in speech recognition”, Proceedings on the IEEE, vol. 77, no. 2, Feb 1989.