What is a Dynamic Time Warping algorithm?

The Dynamic Time Warping (DTW) algorithm is a time series alignment algorithm originally developed for speech recognition tasks. It aligns two sequences of feature vectors by iteratively warping the time axis until an optimal match between the two sequences, according to a suitable metric, is found.

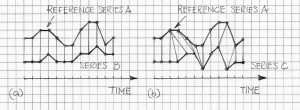

Metrics used for measuring the “distance” between the two time series include, but are not limited to, the Euclidean and Manhattan distances (Ref.[1]). Figure 1 conceptually illustrates two time series that require alignment according to the pre-selected definition of the distance between them.
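As a small illustration of the two metrics named above, the following sketch computes them for a pair of equal-length feature vectors. The function names `euclidean` and `manhattan` are chosen here for illustration and do not come from Ref.[1].

```python
import math

def euclidean(u, v):
    """Euclidean (L2) distance between two equal-length feature vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))

def manhattan(u, v):
    """Manhattan (L1) distance between two equal-length feature vectors."""
    return sum(abs(a - b) for a, b in zip(u, v))

if __name__ == "__main__":
    u, v = [0.0, 0.0], [3.0, 4.0]
    print(euclidean(u, v), manhattan(u, v))
```

Either function can serve as the element-wise distance between corresponding frames of the two series.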

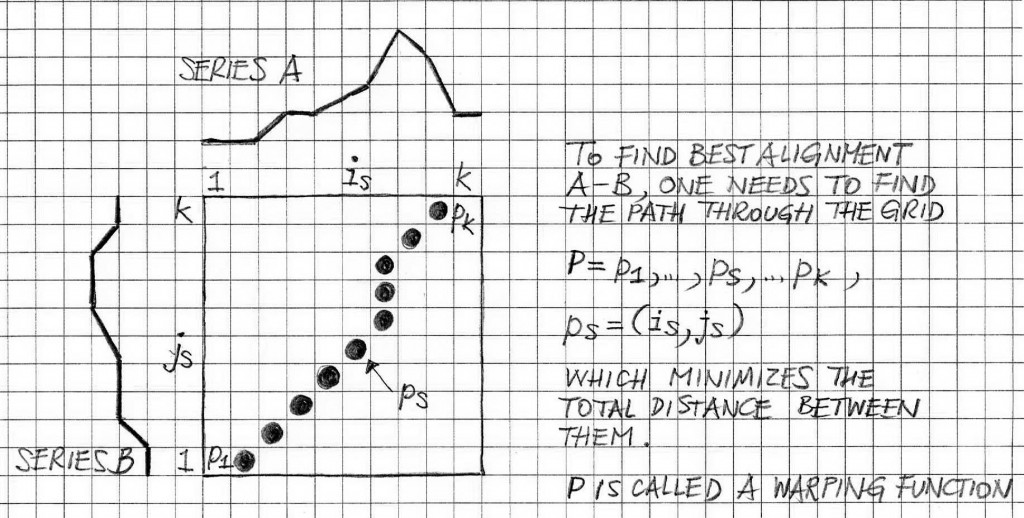

Figure 2 illustrates the concept of a warping function. The points of the grid are $p_s = (i_s, j_s)$, $s = 1, 2, \ldots, k$. The total distance between A and B is

$$D(A, B) = \sum_{s=1}^{k} d(p_s)$$

where $d(p_s)$ is the individual distance between the corresponding elements of series A and B.
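The search for the warping path with the smallest total distance is commonly done with dynamic programming. The sketch below is a minimal version under a few assumptions that are not stated in this note: both series are non-empty, each step of the path advances by one element in A, in B, or in both, all steps are weighted equally, and the default element distance is the absolute difference. The name `dtw` and its parameters are illustrative.

```python
def dtw(a, b, dist=lambda x, y: abs(x - y)):
    """Return (total_distance, path) for a minimum-cost warping of a onto b.

    path is a list of index pairs (i, j) pairing a[i] with b[j].
    """
    n, m = len(a), len(b)
    inf = float("inf")
    # cost[i][j]: smallest accumulated distance aligning a[:i] with b[:j]
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = dist(a[i - 1], b[j - 1])
            cost[i][j] = d + min(cost[i - 1][j - 1],  # advance in both series
                                 cost[i - 1][j],      # advance in a only
                                 cost[i][j - 1])      # advance in b only

    # Walk back from the end of both series to recover the warping path.
    path = []
    i, j = n, m
    while i > 0 and j > 0:
        path.append((i - 1, j - 1))
        _, i, j = min((cost[i - 1][j - 1], i - 1, j - 1),
                      (cost[i - 1][j], i - 1, j),
                      (cost[i][j - 1], i, j - 1))
    path.reverse()
    return cost[n][m], path

if __name__ == "__main__":
    total, path = dtw([1, 2, 3, 4], [1, 2, 2, 3, 4])
    print(total, path)
```

The table has one cell per pair of elements, so time and memory grow with the product of the two series lengths; practical implementations often restrict the path to a band around the diagonal.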

Dynamic time warping can be used to align audio streams that have become misaligned due to limitations of the data acquisition system. Ref.[2] discusses aligning two audio streams using a short-duration sliding window and processing the data within the window via the cross-correlation function. In this case, the individual distance between the two series (or audio streams) at a given position of the sliding window can be measured using the normalized maximum value of the cross-correlation function.
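The sliding-window measurement described above can be sketched as follows. This is not the procedure of Ref.[2]; it is a minimal illustration that assumes both streams share one sample rate, normalizes by the window energies, and converts the peak into a distance as `1 - peak` (an assumption made here so that well-matched windows score near zero). All function and parameter names are hypothetical.

```python
import math

def normalized_xcorr_peak(x, y, max_lag):
    """Return (peak, lag) for two equal-length windows.

    peak is the largest normalized cross-correlation over lags
    -max_lag..max_lag; a positive lag means y is delayed relative to x.
    """
    n = len(x)
    norm = math.sqrt(sum(v * v for v in x) * sum(v * v for v in y))
    if norm == 0.0:
        return 0.0, 0  # a silent window carries no alignment information
    best_peak, best_lag = float("-inf"), 0
    for lag in range(-max_lag, max_lag + 1):
        # Correlate x[i] with y[i + lag] over the overlapping samples only.
        acc = sum(x[i] * y[i + lag]
                  for i in range(max(0, -lag), min(n, n - lag)))
        if acc / norm > best_peak:
            best_peak, best_lag = acc / norm, lag
    return best_peak, best_lag

def window_distances(a, b, win, hop, max_lag):
    """Slide a window over both streams; return (start, distance, lag) tuples."""
    out = []
    for start in range(0, min(len(a), len(b)) - win + 1, hop):
        peak, lag = normalized_xcorr_peak(a[start:start + win],
                                          b[start:start + win], max_lag)
        out.append((start, 1.0 - peak, lag))  # assumed distance: 1 - peak
    return out

if __name__ == "__main__":
    pulse = [0, 0, 0, 1, 2, 3, 2, 1, 0, 0, 0, 0, 0, 0, 0, 0]
    delayed = [0, 0] + pulse[:-2]  # same pulse, two samples later
    print(window_distances(pulse, delayed, win=16, hop=16, max_lag=5))
```

The per-window lag shows how the offset between the streams drifts over time, while the distance indicates how trustworthy each estimate is.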

Other approaches to aligning audio streams include computing cross-correlation functions of the audio signal envelopes (as opposed to the audio signal waveforms). The advantage of using envelope cross-correlations is that the computations are less intensive: envelopes can be downsampled because their frequency band is significantly narrower than that of the audio waveforms.
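A rough sketch of the envelope-based variant is shown below. It assumes a deliberately crude envelope detector (rectification followed by a moving average, which also acts as a simple low-pass filter before downsampling) and removes the mean of each envelope before correlating. The names `envelope`, `best_lag`, and `envelope_lag`, and the default parameter values, are illustrative assumptions rather than anything prescribed in this note.

```python
def envelope(signal, smooth, factor):
    """Crude amplitude envelope: rectify, average over the last `smooth`
    samples, then keep every `factor`-th sample (downsampling)."""
    rect = [abs(v) for v in signal]
    env, acc = [], 0.0
    for i, v in enumerate(rect):
        acc += v
        if i >= smooth:
            acc -= rect[i - smooth]  # drop the sample leaving the window
        env.append(acc / min(i + 1, smooth))
    return env[::factor]

def best_lag(x, y, max_lag):
    """Lag in -max_lag..max_lag maximizing sum(x[i] * y[i + lag])."""
    n = min(len(x), len(y))

    def corr(lag):
        return sum(x[i] * y[i + lag]
                   for i in range(max(0, -lag), min(n, n - lag)))

    return max(range(-max_lag, max_lag + 1), key=corr)

def envelope_lag(a, b, smooth=32, factor=8, max_lag=64):
    """Estimate the delay of b relative to a, in original samples.

    The resolution is limited to multiples of `factor`.
    """
    ea, eb = envelope(a, smooth, factor), envelope(b, smooth, factor)
    mean_a, mean_b = sum(ea) / len(ea), sum(eb) / len(eb)
    ea = [v - mean_a for v in ea]  # remove the DC offset of each envelope
    eb = [v - mean_b for v in eb]
    return best_lag(ea, eb, max_lag // factor) * factor
```

With `factor=8`, the correlation runs over one eighth of the samples and one eighth of the candidate lags, at the price of a coarser delay estimate; a finer waveform-level search around the coarse estimate can follow if needed.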

VOCAL’s Voice Enhancement solutions’ practices include the analysis and verification of laboratory audio data using various DSP-based techniques such as the ones discussed in this note.

REFERENCES

1. Elena Tsiporkova (University of Ghent), Dynamic Time Warping Algorithm, 2003 report, courtesy of Emory University

2. Aligning Audio Streams Captured using Unsynchronized Clocks