Time Delay Estimation

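In signal processing, many tasks require an estimate of the time delay of a signal. The received signal is usually modeled as

$$y(t) = x(t - \tau) + n(t),$$

where $x(t)$ is the source signal, $n(t)$ is additive noise, and $\tau$ is the delay.

The pure delay term, $\tau$, can be used for many applications such as system diagnosis, radar ranging, direction-of-arrival estimation, and velocity measurement. More generally, we may model a multipath channel as

$$y(t) = \sum_{i} a_i \, x(t - \tau_i) + n(t),$$

where $a_i$ and $\tau_i$ are the gain and delay of the $i$-th path.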

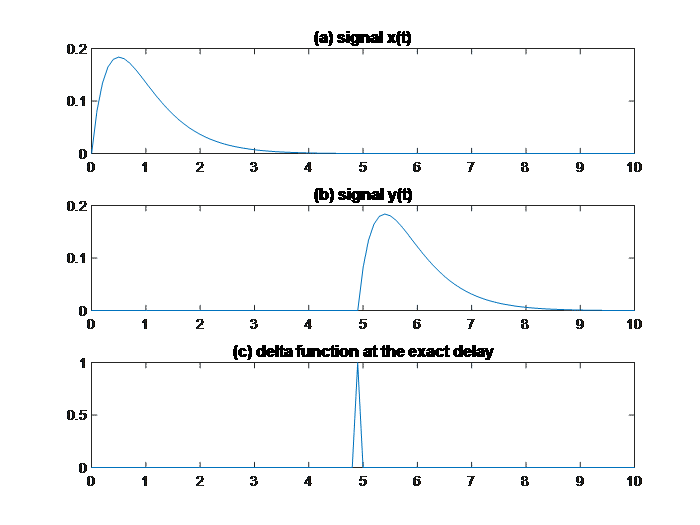

In this paper we consider only algorithms that can handle fractional time delays, that is, delays that are not an integer multiple of the sampling period. The problem can be formulated as follows. The short-duration signal shown in Figure 1 a) is the generating signal $x(t)$, and Figure 1 b) is the delayed signal $y(t)$. The signals $x(t)$ and $y(t)$ are connected mathematically by an impulse function $h(t)$, shown in Figure 1 c), through convolution,

$$y(t) = x(t) * h(t) + n(t).$$

In reality, $x(t)$ may or may not be available. Our goal is to recover the impulse response function $h(t)$.
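As a minimal numerical sketch of this formulation (not the method developed in this paper), a delay by a non-integer number of samples can be applied as a linear phase shift of the spectrum. This is equivalent to circular convolution with a band-limited, shifted impulse. The function name `fractional_delay`, the Gaussian test pulse, and the 20.5-sample delay are illustrative assumptions.

```python
import numpy as np

def fractional_delay(x, delay_samples):
    """Circularly delay x by a possibly non-integer number of samples.

    The delay is applied as a linear phase exp(-j*2*pi*f*delay) in the
    frequency domain, i.e. circular convolution with a shifted impulse.
    """
    n = len(x)
    f = np.fft.rfftfreq(n)              # frequency in cycles per sample
    spectrum = np.fft.rfft(x)
    phase = np.exp(-2j * np.pi * f * delay_samples)
    return np.fft.irfft(spectrum * phase, n=n)

# Short-duration generating signal: a Gaussian pulse centred at sample 60.
n = 256
t = np.arange(n)
x = np.exp(-0.5 * ((t - 60) / 4.0) ** 2)

# Delayed signal with a fractional delay of 20.5 samples (assumed value).
y = fractional_delay(x, 20.5)
```

With an integer delay the result coincides with a plain circular shift of `x`. With the fractional delay above, the peak of `y` falls halfway between samples 80 and 81, which is why a sample-resolution peak search alone cannot recover it.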

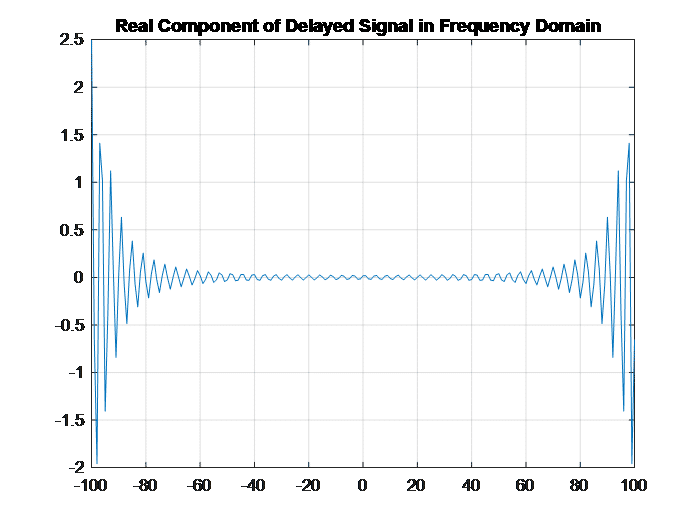

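We ignore the noise term for simplicity. In the frequency domain, for a single delay $\tau$,

$$Y(f) = X(f) H(f), \qquad H(f) = e^{-j 2\pi f \tau},$$

where $X(f)$, $Y(f)$, and $H(f)$ are the Fourier transforms of the generating signal, the delayed signal, and the impulse response, and if we take the real component only,

$$\operatorname{Re}\{H(f)\} = \cos(2\pi f \tau).$$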

Figure 2 shows the sinusoidal nature of the delayed signal in the frequency domain. Naturally, one more layer of frequency transform reveals the rate of this oscillation along the frequency axis, which is the delay we seek. However, because the frequency-domain representation varies over a wide dynamic range, we may apply a logarithm before the second transform. The same practice is common in cepstral analysis.
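The log-then-transform idea can be sketched with the real cepstrum. The example below is an illustration under stated assumptions, not the algorithm of this paper: it assumes a two-path signal (a direct path plus one echo), a white-noise source, and an integer, circular delay so that the peak falls exactly on a sample. The names `cepstrum_delay`, `min_lag`, `delay`, and `gain` are hypothetical.

```python
import numpy as np

def cepstrum_delay(y, min_lag=1):
    """Estimate an echo delay (in samples) from the real cepstrum of y.

    Steps: spectrum -> log magnitude -> inverse transform, then pick the
    largest cepstral value at lags in [min_lag, len(y) // 2).
    """
    n = len(y)
    spectrum = np.fft.fft(y)
    # The small constant guards against log(0); it is an assumed safeguard.
    log_mag = np.log(np.abs(spectrum) + 1e-12)
    cepstrum = np.fft.ifft(log_mag).real
    return min_lag + int(np.argmax(cepstrum[min_lag:n // 2]))

# Assumed test signal: white noise plus one attenuated, delayed copy.
rng = np.random.default_rng(0)
n = 1024
x = rng.standard_normal(n)
delay, gain = 37, 0.6
y = x + gain * np.roll(x, delay)

estimate = cepstrum_delay(y)
print("estimated delay (samples):", estimate)
```

The logarithm turns the product of the source spectrum and the channel response into a sum, so the ripple caused by the echo appears as an isolated peak at the delay. Handling fractional delays would additionally require interpolating around that peak, which this sketch does not attempt.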