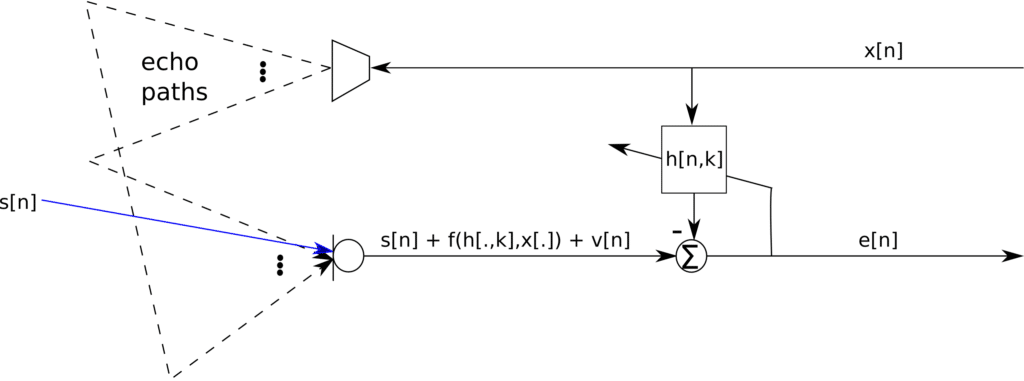

Typical algorithms for acoustic echo cancellation (AEC) are the LMS and NLMS algorithms for their ease of implementation and relatively fast convergence rates, especially for real time implementations. The filter taps obtained from the NLMS, and by extension the LMS algorithm, is however not the maximum likelihood estimate. We derive the maximum likelihood estimate and compare to the NLMS and the LMS algorithms. Consider the systems depicted in Figure 1 below:

Figure 1: Single line AEC architecture

The problem at hand is to correctly estimate the filter taps, such that , to minimize the error. The presence of the signal

may cause the adaptive system to cancel out the speech signal, thus leading most AECs updating the filter coefficients only when there is no speech detected. Now consider a received signal

where is additive noise. Also consider that

is known. We want to estimate

such that

is minimized, where

Consider the noise signal being i.i.d. Gaussian, then the likelihood function, the joint pdf, for a frame of length N can be given as

where ,

and

. The log-likelihood function then becomes

where is a constant term. The maximum value of the log-likelihood function will obey:

Thus the ML algorithm will proceed as:

Comparing the ML update equation to the NLMS algorithm update which is reproduced below,

it can be seen that there is a change of to

and a reintroduction of the step size

. The popularity of the NLMS is precisely because of the availability of

. However, given a frame size of length

, the variance of the noise could easily be estimated. Notice that the variance of the noise is the same as that of the recorded signal

and if given that

is zero mean, then the variance can be estimated directly from

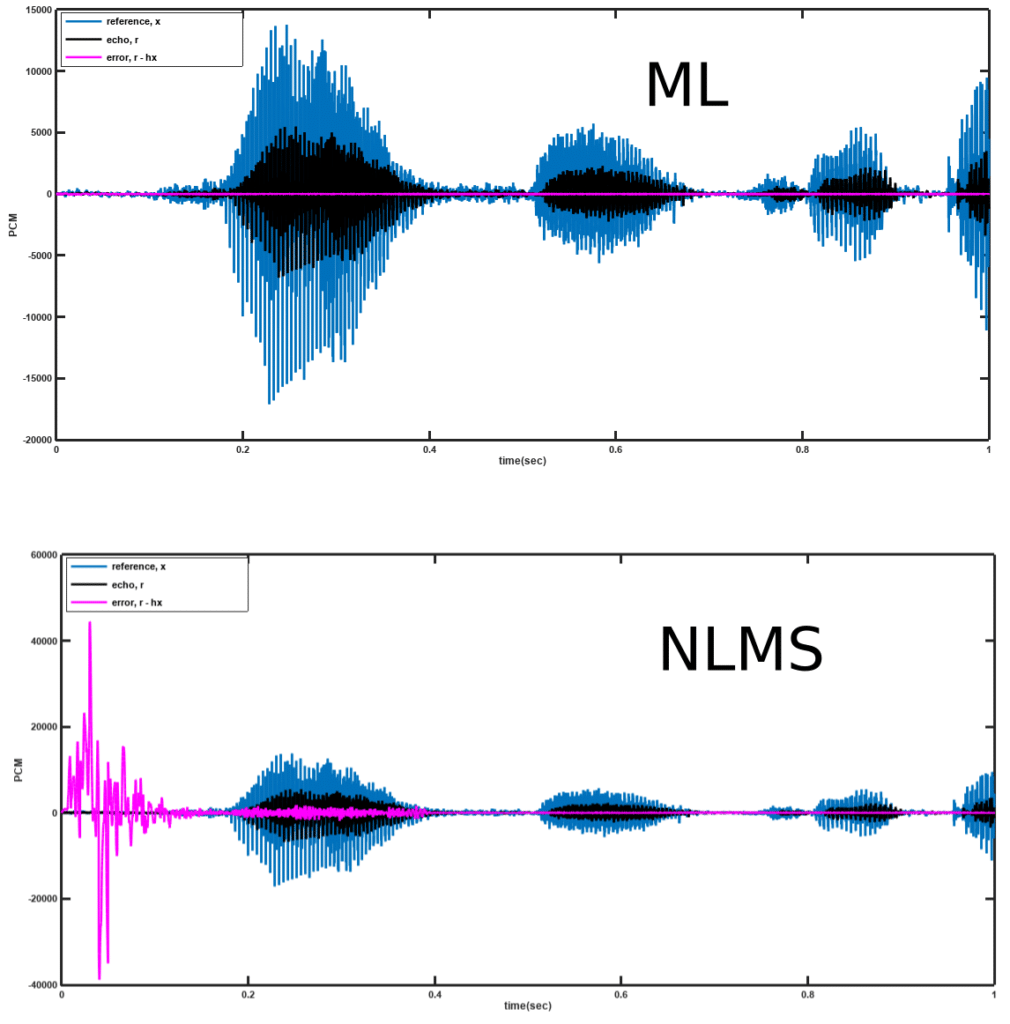

. A sample result for this algorithm is shown in Figure 2 below with comparison with the NLMS algorithm.

Figure 2: Performance of ML AEC
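The difference between the two updates can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the implementation behind the figure: the function names `nlms_step`, `ml_step` and `simulate`, the 8-tap random echo path, the white-noise far-end signal, and the values of the step size and noise level are all hypothetical. The noise variance is estimated here from a frame in which the far end is assumed silent, so that the recorded signal consists of zero-mean noise only. Because the correction is divided by a small variance, the step size has to be chosen small enough for the update to remain stable.

```python
import numpy as np

def nlms_step(h, x_vec, d_n, eps=1e-12):
    """NLMS update: the correction is normalised by the input energy x^T x."""
    e = d_n - h @ x_vec
    return h + e * x_vec / (x_vec @ x_vec + eps)

def ml_step(h, x_vec, d_n, sigma2, mu):
    """ML-style update: x^T x is replaced by the noise variance, scaled by mu."""
    e = d_n - h @ x_vec
    return h + (mu / sigma2) * e * x_vec

def simulate(taps=8, silent=512, active=5000, sigma=0.1, mu=1e-4, seed=0):
    rng = np.random.default_rng(seed)
    h_true = rng.standard_normal(taps)
    h_true /= np.linalg.norm(h_true)            # unit-norm echo path (assumed)
    # Far-end signal: a silent lead-in followed by white noise (assumed).
    x = np.concatenate([np.zeros(silent), rng.standard_normal(active)])
    v = sigma * rng.standard_normal(x.size)     # zero-mean Gaussian noise
    d = np.convolve(x, h_true)[:x.size] + v     # recorded signal

    # While the far end is silent, d(n) = v(n), so the mean square of the
    # frame estimates the noise variance (zero-mean assumption).
    sigma2_hat = np.mean(d[:silent] ** 2)

    h_nlms = np.zeros(taps)
    h_ml = np.zeros(taps)
    mis_nlms, mis_ml = [], []
    for n in range(silent, x.size):
        x_vec = x[n - taps + 1:n + 1][::-1]     # [x(n), ..., x(n-taps+1)]
        h_nlms = nlms_step(h_nlms, x_vec, d[n])
        h_ml = ml_step(h_ml, x_vec, d[n], sigma2_hat, mu)
        mis_nlms.append(np.sum((h_true - h_nlms) ** 2))
        mis_ml.append(np.sum((h_true - h_ml) ** 2))
    # Squared tap error averaged over the last 500 samples of each filter.
    return sigma2_hat, np.mean(mis_nlms[-500:]), np.mean(mis_ml[-500:])

if __name__ == "__main__":
    sigma2_hat, mis_nlms, mis_ml = simulate()
    print(f"estimated noise variance: {sigma2_hat:.4f}")
    print(f"NLMS misalignment: {mis_nlms:.2e}, ML misalignment: {mis_ml:.2e}")
```

Calling `simulate()` returns the estimated noise variance together with the average squared tap error of each filter, which gives a quick way to compare the two updates for a given step size and noise level.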
