Speech recognition uses specific characteristics of the sound being captured. When a speech signal is present within a captured signal, its characteristics in both the time and frequency domains can be used to determine which words are being spoken. Intelligible speech can be broken up into phonemes, the smallest distinctive segments of sound that bring about a change of meaning in words. Phonemes can be classified as vowels and consonants, and can be analyzed to determine which word is being spoken. Many speech recognition systems use vast libraries of these phonemes and their transitions in order to make sense of spoken sounds.

Speech Composition

Using digital signal processing, one can take a time-varying signal x(t) and view its frequency spectrum X(f) using a Fourier transform. The resulting frequency-domain representation can then be used in a variety of ways to classify and discern the speech. Although an entire speech signal can be converted and processed at once, it is often easier to break the time-domain signal into smaller frames that can be processed as individual phonemes. Issues that can arise when doing so include unstable frames, discontinuities at frame boundaries, and the smoothing over of detail within individual frames. Assuming that there are no issues with frame selection, the entire spoken signal can be broken down into a collection of phonemes, each with its own Fourier transform.
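The framing and per-frame transform described above can be sketched in Python as follows. This is a minimal illustration, not the method of any particular recognizer: the 25 ms frame length, 10 ms hop, and Hann window are common choices assumed here, and the naive DFT stands in for the FFT a real system would use.

```python
import cmath
import math


def frame_signal(x, frame_len, hop):
    """Split the sampled signal x into overlapping frames of frame_len samples,
    starting a new frame every hop samples. A trailing partial frame is dropped."""
    return [x[start:start + frame_len]
            for start in range(0, len(x) - frame_len + 1, hop)]


def hann(n):
    """Symmetric Hann window; tapering the frame edges reduces the spectral
    leakage caused by cutting the signal abruptly at frame boundaries."""
    return [0.5 - 0.5 * math.cos(2 * math.pi * k / (n - 1)) for k in range(n)]


def magnitude_spectrum(frame, fs):
    """Window one frame and take a naive DFT (an FFT would be used in practice).
    Returns (frequencies_hz, magnitudes) for bins 0..N/2."""
    n = len(frame)
    windowed = [s * w for s, w in zip(frame, hann(n))]
    freqs, mags = [], []
    for k in range(n // 2 + 1):
        acc = sum(windowed[t] * cmath.exp(-2j * math.pi * k * t / n)
                  for t in range(n))
        freqs.append(k * fs / n)
        mags.append(abs(acc))
    return freqs, mags


if __name__ == "__main__":
    fs = 8000                  # assumed sample rate (Hz)
    frame_len, hop = 200, 80   # 25 ms frames with a 10 ms hop at 8 kHz
    x = [math.sin(2 * math.pi * 440 * t / fs) for t in range(fs)]  # 1 s test tone
    frames = frame_signal(x, frame_len, hop)
    freqs, mags = magnitude_spectrum(frames[0], fs)
    print(len(frames), freqs[mags.index(max(mags))])  # 98 440.0
```

For a 1 s signal at 8 kHz this yields 98 frames of 25 ms each, and the spectrum of each frame has 40 Hz bin spacing. Overlapping, windowed frames are one common way to soften the frame-boundary discontinuities mentioned above.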

Describing Vowels

To get a better sense of how speech can be recognized, it is important to know how phonemes are physically formed. Vowels are created by the vibration of the vocal cords. The sound emitted by the vocal cords is shaped by resonance frequencies that depend on the size and shape of the vocal tract. These resonance frequencies are specific to each vowel and lie in the lower part of the frequency spectrum. The tongue is the most important articulator for creating vowels, and vowels are described by its front/back and high/low position within the mouth. Some vowels, such as [i] and [u], are created with the tongue close to the roof of the mouth, while others, such as [a], are created with the tongue furthest away from the roof of the mouth. Tongue height is classified as high, mid, or low, and the front/back dimension is classified as front, central, or back.

Vowels can also be described based on how centralized the tongue is. Some pairs of vowels, such as [i]/[ɪ] and [e]/[ɛ], can be referred to as tense or lax. A tense vowel is produced when the tongue is higher and less centralized than in its lax counterpart. The following vowels are tense: [i], [e], [u], [o], and the following vowels are lax: [ɪ], [ɛ], [ʊ]. When enunciating vowels, the shape of the lips is also important. The following vowels are rounded: [u], [ʊ], [o]. When saying these vowels, your lips make a circular shape. For other vowels, such as [i], [e], [ɛ], and [æ], the lips are not rounded but spread.
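The vowel features described in this section can be collected into a small lookup table. In this sketch the tense/lax and rounded values follow the text; the height and front/back values for each vowel are standard phonetic classifications added for illustration, and `select` is a hypothetical helper name. [æ] is left unclassified for tenseness because the text does not list it.

```python
from typing import NamedTuple, Optional


class Vowel(NamedTuple):
    height: str             # "high", "mid", or "low"
    backness: str           # "front", "central", or "back"
    tense: Optional[bool]   # None where the text gives no tense/lax label
    rounded: bool


# Tense/lax and rounding follow the text; height and backness are standard
# phonetic classifications added for illustration.
VOWELS = {
    "i": Vowel("high", "front", True, False),
    "ɪ": Vowel("high", "front", False, False),
    "e": Vowel("mid", "front", True, False),
    "ɛ": Vowel("mid", "front", False, False),
    "æ": Vowel("low", "front", None, False),
    "u": Vowel("high", "back", True, True),
    "ʊ": Vowel("high", "back", False, True),
    "o": Vowel("mid", "back", True, True),
}


def select(**features):
    """Hypothetical helper: list the vowels matching every given feature."""
    return [symbol for symbol, f in VOWELS.items()
            if all(getattr(f, name) == value for name, value in features.items())]
```

For example, `select(rounded=True)` returns `['u', 'ʊ', 'o']`, and `select(height='high', backness='front')` returns `['i', 'ɪ']`.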

Describing Consonants

Consonants are more complicated than vowels and often come from multiple sources. Vowels are composed only of voiced components, whereas consonants can be voiced or unvoiced. Consonants are formed by constricting the vocal tract at various points, referred to as the place of articulation. Along with voicing and the place of articulation, the manner of articulation is crucial to creating and differentiating consonants.

Voicing

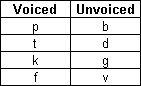

Consonants can be classified as either voiced or unvoiced. Sounds made while the vocal folds vibrate are said to be voiced, while those made without vibration are said to be unvoiced. Nearly every voiced consonant has an unvoiced counterpart with the same place and manner of articulation. Some pairs of voiced and unvoiced consonants are shown in Figure 1:

Figure 1: Some Voiced and Unvoiced Examples
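One simple way to make the voiced/unvoiced distinction on a sampled signal is the zero-crossing rate: vocal-fold vibration produces a periodic waveform dominated by low frequencies that crosses zero rarely, while unvoiced turbulence crosses zero far more often. The sketch below assumes each frame already contains speech, and its threshold of 0.25 is an illustrative assumption rather than a tuned value; practical detectors usually combine this with frame energy or a periodicity measure such as autocorrelation.

```python
import math
import random


def zero_crossing_rate(frame):
    """Fraction of adjacent sample pairs whose signs differ."""
    crossings = sum(1 for a, b in zip(frame, frame[1:]) if (a >= 0) != (b >= 0))
    return crossings / (len(frame) - 1)


def is_voiced(frame, zcr_threshold=0.25):
    """Crude voiced/unvoiced decision for a frame assumed to contain speech.
    There is no energy check, so a silent frame would wrongly count as voiced.
    The threshold is an illustrative assumption, not a tuned value."""
    return zero_crossing_rate(frame) < zcr_threshold


if __name__ == "__main__":
    fs = 8000  # assumed sample rate (Hz)
    # A 120 Hz tone stands in for a voiced frame.
    tone = [math.sin(2 * math.pi * 120 * t / fs) for t in range(200)]
    # Seeded white noise stands in for unvoiced frication.
    rng = random.Random(0)
    noise = [rng.uniform(-1, 1) for _ in range(200)]
    print(is_voiced(tone), is_voiced(noise))
```

White noise crosses zero on roughly half of all sample pairs, while a 120 Hz tone at 8 kHz crosses on only a few percent, so the two land well on either side of the threshold.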

Place of Articulation

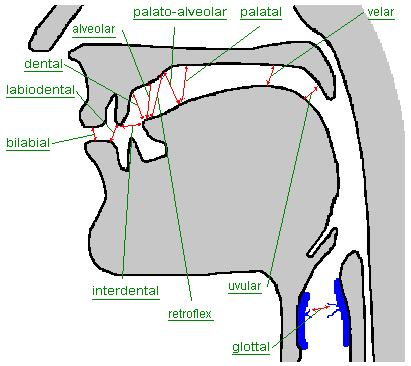

The vocal tract refers to the part of the body used for sound production. In humans, the vocal tract runs from the vocal cords to the lips and contains the pharynx, mouth, and nasal cavities. Using the various components of the vocal tract, including the teeth, lips, and tongue, to constrict airflow results in the production of different consonants. Some examples of places of articulation are bilabial, labiodental, dental, alveolar, postalveolar, retroflex, palatal, velar, and glottal. By changing the resonances of the vocal tract, different sounds can be created. For instance, when the two lips are the articulators (the movers during sound creation), the place of articulation is known as bilabial. The following diagram [2] shows where a few of the places of articulation are located:

Figure 2: Places of Articulation [2]

Manner of Articulation

The manner of articulation describes how the sound is created. There are five basic manners of producing consonants: stops, fricatives, approximants, affricates, and laterals. Stops completely cut off airflow through the mouth. They are classified as nasal stops (nasals), in which air still escapes through the nose, or oral stops (plosives). Some plosives include /t/ and /d/, while an example of a nasal is /n/. Fricatives are produced by constrictions that come close to a stop but do not completely close. This results in turbulent air flowing through the small gap and can produce consonants including /f/, /v/, /s/, and /z/. Approximants are less constrictive than fricatives and do not create the turbulence that fricatives do. Affricates contain a stop followed by a fricative: after a brief stop, the tongue slowly pulls away to create a small gap and some air turbulence. The final manner, the lateral, is much less common than the others. Laterals pass air around a central closure, such as along the sides of the tongue in /l/.
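Voicing, place, and manner together give each consonant a feature triple, and voiced/unvoiced pairs like those in Figure 1 are simply consonants that share place and manner but differ in voicing. The table below is a partial, illustrative set of English consonants using standard IPA classifications (not taken from this article's figures), and `voicing_counterpart` is a hypothetical helper.

```python
from typing import NamedTuple, Optional


class Consonant(NamedTuple):
    voiced: bool
    place: str    # place of articulation
    manner: str   # manner of articulation


# Partial, illustrative set using standard IPA classifications.
CONSONANTS = {
    "p": Consonant(False, "bilabial", "plosive"),
    "b": Consonant(True, "bilabial", "plosive"),
    "m": Consonant(True, "bilabial", "nasal"),
    "f": Consonant(False, "labiodental", "fricative"),
    "v": Consonant(True, "labiodental", "fricative"),
    "θ": Consonant(False, "dental", "fricative"),
    "ð": Consonant(True, "dental", "fricative"),
    "t": Consonant(False, "alveolar", "plosive"),
    "d": Consonant(True, "alveolar", "plosive"),
    "n": Consonant(True, "alveolar", "nasal"),
    "s": Consonant(False, "alveolar", "fricative"),
    "z": Consonant(True, "alveolar", "fricative"),
    "l": Consonant(True, "alveolar", "lateral"),
    "ʃ": Consonant(False, "postalveolar", "fricative"),
    "ʒ": Consonant(True, "postalveolar", "fricative"),
    "tʃ": Consonant(False, "postalveolar", "affricate"),
    "dʒ": Consonant(True, "postalveolar", "affricate"),
    "j": Consonant(True, "palatal", "approximant"),
    "k": Consonant(False, "velar", "plosive"),
    "g": Consonant(True, "velar", "plosive"),
    "h": Consonant(False, "glottal", "fricative"),
}


def voicing_counterpart(symbol: str) -> Optional[str]:
    """Hypothetical helper: return the consonant with the same place and manner
    but opposite voicing, or None if the table has no such partner."""
    target = CONSONANTS[symbol]
    for other, f in CONSONANTS.items():
        same_articulation = (f.place, f.manner) == (target.place, target.manner)
        if same_articulation and f.voiced != target.voiced:
            return other
    return None
```

`voicing_counterpart('t')` returns `'d'` and `voicing_counterpart('z')` returns `'s'`, while `'n'` returns `None`, since the nasals in this table have no unvoiced partner.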

Frequency Characteristics of Phonemes

Once the incoming speech signal is converted to the frequency domain using a transform such as the Fourier transform, its specific frequency characteristics can be exploited. Looking at speech signals that contain only a single phoneme provides the basis for speech recognition. An individual phoneme is composed of many different frequencies, but some frequencies carry much more energy than others.

The most important of these frequencies, the formants, carry high energy throughout most or all of the phoneme. Formants appear as peaks in the frequency domain, denoting that a lot of energy is present at a particular frequency or band of frequencies. Physically, the formants correspond to resonance frequencies of the vocal tract. As described earlier, the place and manner of articulation, together with the positions of the tongue and lips, define these resonance frequencies. Looking at an example frequency spectrum, one can pick out the formants of speech, with the first (lowest) formants being most important for classification. The following plot shows the frequencies present throughout [a]:
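Picking formants out of a spectrum amounts to finding its prominent peaks and ordering them by frequency. The sketch below assumes it is given a smoothed spectral envelope (for example, from linear prediction or cepstral smoothing), because a raw voiced spectrum also has a peak at every harmonic of the pitch. The relative-height threshold is an assumption, and `synthetic_envelope` is a hypothetical stand-in for measured data.

```python
import math


def formant_candidates(freqs, envelope, min_rel_height=0.1):
    """Return the frequencies of local maxima in a smoothed spectral envelope,
    lowest first, ignoring peaks weaker than min_rel_height times the strongest.
    Index 0 approximates F1, index 1 approximates F2, and so on."""
    top = max(envelope)
    return [
        freqs[k]
        for k in range(1, len(envelope) - 1)
        if envelope[k] > envelope[k - 1]
        and envelope[k] >= envelope[k + 1]
        and envelope[k] >= min_rel_height * top
    ]


def synthetic_envelope(freqs, bumps, width_hz=60.0):
    """Illustrative stand-in for a measured envelope: one Gaussian bump per
    (center_hz, amplitude) pair."""
    return [
        sum(a * math.exp(-0.5 * ((f - c) / width_hz) ** 2) for c, a in bumps)
        for f in freqs
    ]
```

With `freqs = [10.0 * k for k in range(401)]` and bumps at 280 Hz (1.0), 2230 Hz (0.5), 2900 Hz (0.3), and 3500 Hz (0.05), `formant_candidates(freqs, synthetic_envelope(freqs, bumps))` returns `[280.0, 2230.0, 2900.0]`; the weak 3500 Hz bump falls below the 10% threshold.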

Figure 3: Frequency Spectra of [a] [4]

Very high levels of energy are concentrated in the lower frequencies, but some peaks can be seen around 2500 Hz, which represent a higher formant. Each phoneme has a specific set of formants that can be used to determine its presence within a speech signal. The following plot shows the frequency spectrum of [i]:

Figure 4: Frequency Spectra of [i] [4]

Unlike [a], [i] does not have a second formant very close to its first formant. Here the first formant is around 280 Hz, while the second formant is around 2230 Hz. This is due to the position of the tongue within the mouth, as well as the shape of the lips. The first formant is determined by the height of the tongue: a high first-formant frequency corresponds to a low tongue, and a low frequency to a high tongue. The frequency of the second formant depends mainly on the front/back position of the tongue: a high frequency corresponds to a front tongue, and a low frequency to a back tongue. A spectrogram plots frequency against time, with shading showing the level of energy at each point. Viewing more examples in a spectrogram shows how different formants become visible:
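The F1/tongue-height and F2/front-back relationships described above can be written as a simple rule. The Hz boundaries in this sketch are illustrative assumptions only; actual boundaries vary considerably between speakers, for example between adult and child voices.

```python
from typing import Tuple


def describe_vowel(f1_hz: float, f2_hz: float) -> Tuple[str, str]:
    """Map F1 to tongue height and F2 to front/back tongue position.

    Low F1 -> high tongue, high F1 -> low tongue.
    High F2 -> front tongue, low F2 -> back tongue.
    The Hz boundaries are illustrative assumptions, not standard values.
    """
    if f1_hz < 400:
        height = "high"
    elif f1_hz < 600:
        height = "mid"
    else:
        height = "low"

    if f2_hz >= 1800:
        backness = "front"
    elif f2_hz >= 1200:
        backness = "central"
    else:
        backness = "back"
    return height, backness
```

`describe_vowel(280, 2230)` returns `('high', 'front')`, consistent with the formants quoted for [i].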

Figure 5: Vowel Spectrogram Examples [5]

The spectrogram above shows the formants as dark bands. Speech recognition algorithms determine the phonemes from the resulting formants, which in turn leads to recognition of what is being spoken. Consonants are more complicated than vowels in terms of the spectrograms they produce, as very often they cannot be identified simply from the presence of a set of formants. Fricatives, which produce turbulent airflow, inherently produce bursts of energy at random frequencies. This results in bands of different widths with differing average frequencies, which can be used for identification. The following spectrogram shows various examples of fricatives:

Figure 6: Fricative Spectrogram Examples [5]

The large frequency bands represent the burst of turbulent air and are centered around different average frequencies. Some fricatives have nearly constant energy across all frequencies. When a voiced component is introduced to the fricative, low-frequency formants also become present:

Figure 7: Voiced Fricatives Spectrogram Examples [5]

The previous two figures show examples of the consonants described in Figure 1, which shows some of the voiced and unvoiced pairs. The burst of energy is still present and centered around the same average frequency, but this time there are also low-frequency formants. Just as with vowels, the formants are mainly a result of tongue position and can be used to differentiate between consonants.
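The "average frequency" of a fricative's noise band can be measured as the spectral centroid, the energy-weighted mean frequency of a frame, and the low-frequency energy added by voicing can be measured as the fraction of energy below some cutoff. This sketch uses a naive DFT in place of an FFT; the 500 Hz cutoff and the function names are assumptions for illustration.

```python
import cmath
import math


def power_spectrum(frame, fs):
    """Naive DFT power spectrum of one frame as (frequency_hz, power) pairs
    for bins 0..N/2 (an FFT would be used in practice)."""
    n = len(frame)
    spectrum = []
    for k in range(n // 2 + 1):
        acc = sum(frame[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
        spectrum.append((k * fs / n, abs(acc) ** 2))
    return spectrum


def spectral_centroid(spectrum):
    """Energy-weighted mean frequency: where the band is 'centered'."""
    total = sum(p for _, p in spectrum)
    return sum(f * p for f, p in spectrum) / total


def low_band_fraction(spectrum, cutoff_hz=500.0):
    """Share of frame energy below cutoff_hz; voicing raises this value."""
    total = sum(p for _, p in spectrum)
    return sum(p for f, p in spectrum if f < cutoff_hz) / total
```

As a synthetic check at 16 kHz with 320-sample frames, a frame of equal-amplitude tones at 4000, 5000, and 6000 Hz has a centroid of about 5000 Hz and almost no energy below 500 Hz; adding a 150 Hz tone of the same amplitude (a stand-in for voicing) raises the low-band fraction to about 0.25. Real fricatives are noise rather than tones, so measured values scatter around such averages.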

Determining each phoneme present in a speech signal requires knowledge of what that phoneme should look like. Speakers sound different from one another, so not all instances of a phoneme will have the same frequency response. Therefore, it is important to look not for exact matches but for the most likely match. Knowing how the individual phonemes are created gives a better understanding of what the frequency response should look like. Once the phonemes and the transitions between them are determined, the speech can be recognized.
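Looking for the most likely match rather than an exact one can be sketched as nearest-template classification in the (F1, F2) plane. Only the [i] template uses values quoted in this article (about 280 Hz and 2230 Hz); the other templates are rough illustrative assumptions, not measurements, and a practical system would learn such references from many speakers and use probabilistic models rather than a single distance.

```python
import math
from typing import Dict, List, Tuple

# Hypothetical (F1, F2) templates in Hz. Only [i] uses values quoted in this
# article; the rest are rough illustrative assumptions.
TEMPLATES: Dict[str, Tuple[float, float]] = {
    "i": (280.0, 2230.0),
    "ɪ": (390.0, 1990.0),
    "ɛ": (530.0, 1840.0),
    "æ": (660.0, 1720.0),
    "a": (730.0, 1090.0),
    "ʊ": (440.0, 1020.0),
    "u": (300.0, 870.0),
}


def rank_matches(f1: float, f2: float) -> List[Tuple[str, float]]:
    """Rank templates by Euclidean distance in the (F1, F2) plane,
    closest (most likely) first."""
    scored = [(symbol, math.hypot(f1 - t1, f2 - t2))
              for symbol, (t1, t2) in TEMPLATES.items()]
    return sorted(scored, key=lambda item: item[1])


def most_likely_vowel(f1: float, f2: float) -> str:
    """Return the closest template, even when no template matches exactly."""
    return rank_matches(f1, f2)[0][0]
```

A measured pair such as (290, 2200) Hz matches no template exactly but is closest to [i]. Plain Euclidean distance in Hz weights F2 differences heavily because F2 spans a wider range; rescaling the axes (for example to a logarithmic or Bark-like scale) is a common refinement.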

References

[1] K. Russell. (2005, November 27). Describing Consonants. [Online] Available: http://home.cc.umanitoba.ca/krussll/phonetics/index.htm

[2] J. Bickford and D. Tuggy. (2002, April). Places of Articulation. [Online] Available: http://www.sil.org/mexico/ling/glosario/E005ci-PlacesArt.htm

[3] R. Klevans and R. Rodman, Voice Recognition. Norwood, MA: Artech House, Inc., 1997.

[4] K. Russell. (2005, November 27). Formants. [Online] Available: http://home.cc.umanitoba.ca/krussll/phonetics/acoustic/formants.html

[5] K. Russell. (2005, November 27). Identifying Sounds in Spectrograms. [Online] Available: http://home.cc.umanitoba.ca/krussll/phonetics/acoustic/spectrogram-sounds.html

![ah Figure 3: Frequency Spectra of [a] [4]](https://vocal.com/wp-content/uploads/2013/02/ah.png)

![i Figure 4: Frequency Spectra of [i] [4]](https://vocal.com/wp-content/uploads/2013/02/i.png)

![ieea Figure 5: Vowel Spectrogram Examples [5]](https://vocal.com/wp-content/uploads/2013/02/ieea.png)

![fricatives Figure 6: Fricative Spectrogram Examples [5]](https://vocal.com/wp-content/uploads/2013/02/fricatives.png)

![voiced-fricatives Figure 7: Voiced Fricatives Spectrogram Examples [5]](https://vocal.com/wp-content/uploads/2013/02/voiced-fricatives.png)